The signal in the noise

Your backlog is not starving for input; it is drowning in it. Surveys, support tickets, social posts, reviews, and in‑app notes pile up faster than anyone can read them. This is where AI feedback tools earn their keep: when volume outpaces human attention, AI helps you collect, classify, and act with clarity.

Modern platforms blend NLP, sentiment analysis, auto‑categorization, and conversational agents to turn raw text into product judgment. The aim is not speed for its own sake; it is consistent quality under pressure.

Why AI feedback tools now

- Volume and velocity exceed manual analysis capacity.

- Fragmented sources hide important context.

- Teams need timely, segmented insights, not monthly summaries.

Industry overviews suggest AI can surface near real‑time patterns from support, surveys, and social, cutting analysis cycles while improving accuracy. See practical overviews from the Zendesk team and Thematic for sentiment methods and tradeoffs:

- https://www.zendesk.com/blog/ai-customer-feedback/

- https://getthematic.com/insights/automated-sentiment-analysis

Takeaway: AI is no longer a sidecar. It is the analysis engine.

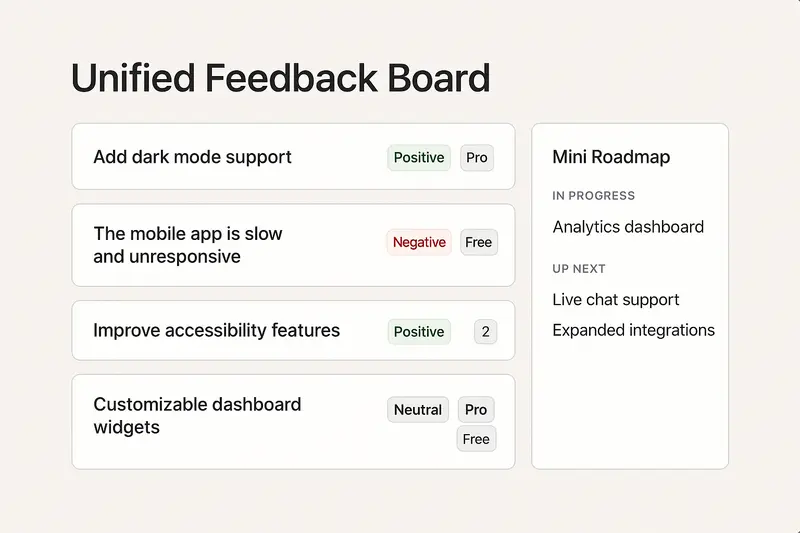

Inside Sleekplan: integrated feedback with Sleek Intelligence

Sleekplan pairs a public or private board, roadmap, and changelog with AI that removes repetitive work. Sleek Intelligence focuses on the parts humans should not babysit:

- Automated collection across channels, pulling feedback that lives in tickets, chats, and email.

- Board hygiene at scale: spam filtering, duplicate detection, and prompts for missing detail.

- Sentiment and urgency signals, so heated issues rise without burying calm but critical ideas.

- Auto‑generated multi‑step surveys based on context, not guesswork.

- A conversational assistant that answers “What are users asking for in onboarding this quarter?” in seconds.

This sits on top of core modules: voting boards, roadmap, changelog, and satisfaction surveys. When a feature ships, voters get notified, closing the loop without extra ops overhead.

Takeaway: integrate capture, insight, and communication, then automate the glue work.

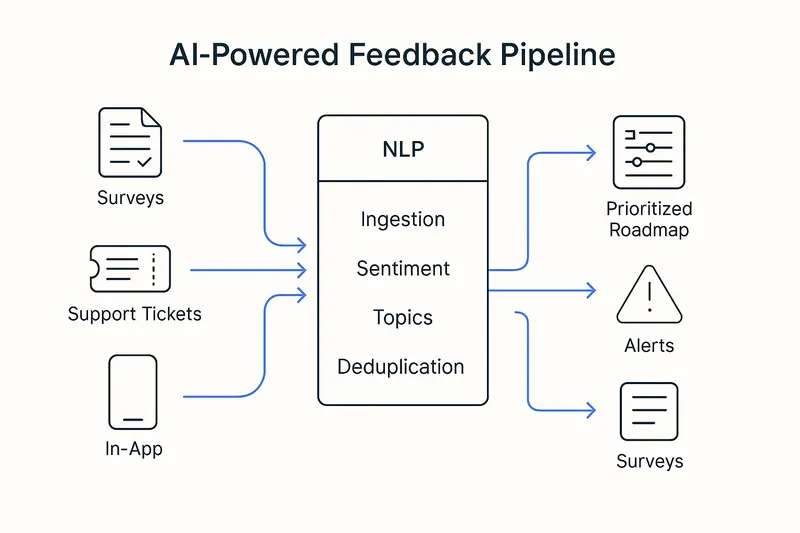

The architecture that makes it work

Modern stacks follow a simple path: ingest, understand, decide, act.

- Ingest

  - Widgets, in‑app surveys, email forwarding, support ticket sync, social monitoring, APIs.

  - Normalize structured and unstructured data into one schema for downstream models.

- Understand

  - NLP pipeline: tokenization, lemmatization, part‑of‑speech tagging, then models for intent, topics, and sentiment.

  - Semantic similarity embeds meaning as vectors, so “improve loading speed” matches “reduce lag.”

  - Abstractive summaries condense long threads into crisp problem statements.

- Decide

  - Scoring blends sentiment, frequency, and segment value to spotlight impact, not just votes.

  - Deduplicate, merge, and link feedback to features or epics.

- Act

  - Auto‑route bugs to support, feature themes to product, adoption risks to success.

  - Notify stakeholders when thresholds trip, and schedule follow‑up surveys.
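
To make the semantic similarity step concrete, here is a minimal sketch of duplicate grouping by cosine similarity. It is an illustration under stated assumptions, not how any particular product does it: the function names, the 0.85 threshold, and the three‑dimensional vectors are all hypothetical. A real system would get its vectors from an embedding model rather than writing them by hand.

```python
from math import sqrt


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def group_duplicates(
    items: dict[str, list[float]], threshold: float = 0.85
) -> list[list[str]]:
    """Greedy grouping: an item joins the first group whose first member
    is at least `threshold` similar; otherwise it starts a new group."""
    groups: list[list[str]] = []
    for text, vec in items.items():
        for group in groups:
            if cosine(items[group[0]], vec) >= threshold:
                group.append(text)
                break
        else:
            groups.append([text])
    return groups


# Hand-made toy vectors for illustration only, NOT real embeddings.
toy_vectors = {
    "improve loading speed": [0.9, 0.1, 0.0],
    "reduce lag": [0.8, 0.2, 0.1],
    "add dark mode": [0.0, 0.1, 0.9],
}

groups = group_duplicates(toy_vectors)
for group in groups:
    print(group)
```

With these toy vectors the two performance requests land in one group and the dark mode request stands alone. The greedy pass is the simplest option; it depends on input order, so a production pipeline would more likely use proper clustering or a vector index.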

Good references on methods and automation paths:

- https://www.zendesk.com/blog/ai-customer-feedback/

- https://www.unwrap.ai/post/customer-feedback-management

Takeaway: precision at each layer compounds into trustworthy outcomes.

What this looks like in practice

- Faster prioritization: move from “most requested” to “highest impact for target segments.” Decisions that weigh sentiment and account value, not raw counts, tend to produce higher adoption and fewer orphaned features.

- Churn prevention: negative sentiment in tickets triggers save‑plays within hours, not weeks. Simple rule, big effect.

- CX fixes that matter: if 60% of complaints cluster around checkout, invest there first and measure NPS lift.

- Ops efficiency: auto‑tagging and routing can trim triage time by more than half, freeing humans for tough cases.
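
The move from "most requested" to "highest impact" can be sketched as a weighted score. Everything here is an assumption for illustration: the `Theme` fields, the weights, and the sample numbers are hypothetical, and the right blend depends on your own segments and data.

```python
from dataclasses import dataclass


@dataclass
class Theme:
    name: str
    mentions: int          # feedback items mapped to this theme
    avg_sentiment: float   # -1.0 (very negative) to 1.0 (very positive)
    segment_value: float   # 0.0 to 1.0, e.g. revenue share of affected accounts


def impact_score(
    theme: Theme,
    max_mentions: int,
    weights: tuple[float, float, float] = (0.4, 0.3, 0.3),  # hypothetical weights
) -> float:
    """Blend frequency, pain (inverted sentiment), and segment value into 0..1."""
    w_freq, w_pain, w_seg = weights
    frequency = theme.mentions / max_mentions if max_mentions else 0.0
    pain = (1.0 - theme.avg_sentiment) / 2.0  # map -1..1 sentiment onto 1..0 pain
    return w_freq * frequency + w_pain * pain + w_seg * theme.segment_value


# Made-up sample data for illustration.
themes = [
    Theme("Dark mode", mentions=120, avg_sentiment=0.2, segment_value=0.1),
    Theme("SSO login failures", mentions=40, avg_sentiment=-0.8, segment_value=0.9),
]

max_mentions = max(t.mentions for t in themes)
ranked = sorted(themes, key=lambda t: impact_score(t, max_mentions), reverse=True)
for t in ranked:
    print(f"{t.name}: {impact_score(t, max_mentions):.2f}")
```

In this sample, the login failures outrank dark mode despite a third of the mentions, because the affected accounts are more valuable and the sentiment is sharply negative.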

Takeaway: the win is focus. Less time sorting, more time solving.

Quick FAQ for featured‑snippet clarity

- What are AI feedback tools? AI feedback tools collect and analyze customer input across channels using NLP, sentiment, and automation to deliver prioritized, actionable insights.

- How do they handle duplicates? They use semantic similarity to group differently worded requests that express the same need, then merge votes and context.

- Are AI sentiment models accurate enough? On short, literal text they are strong. On sarcasm or domain slang, combine models with human review on high‑impact items. Balance speed with oversight.
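
One way to balance speed with oversight is a small gate that decides which model outputs a human should check. This is a sketch under assumptions: the function name, the 0.8 confidence floor, and the 0.7 account‑value cutoff are hypothetical, and it presumes your sentiment model exposes a confidence score.

```python
def needs_human_review(
    confidence: float,          # model confidence in its sentiment label, 0.0 to 1.0
    account_value: float,       # normalized account importance, 0.0 to 1.0
    min_confidence: float = 0.8,  # hypothetical threshold
    high_value: float = 0.7,      # hypothetical threshold
) -> bool:
    """Send an item to a person when the model is unsure or the stakes are high."""
    return confidence < min_confidence or account_value >= high_value


print(needs_human_review(confidence=0.95, account_value=0.2))  # confident, low stakes
print(needs_human_review(confidence=0.55, account_value=0.2))  # model is unsure
print(needs_human_review(confidence=0.95, account_value=0.9))  # high-impact account
```

Only the first case skips review; the other two go to a person, one because the model is unsure and one because the account matters too much to leave unchecked.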

Implementation playbook

Start small, wire deep, scale fast only when the loop works.

- Define the job: volume triage, fragmentation, or insight quality. Pick one.

- Map sources: support, CRM, analytics, communities, and review sites.

- Unify identities: attach segment and revenue context to each voice.

- Set rules: thresholds for alerts, merge criteria, and escalation paths.

- Close the loop: roadmap visibility and changelog updates tied to feedback IDs.

- Review cadence: weekly model checks, monthly taxonomy tune‑ups.
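
The "set rules" step is easier to review when the rules live in one small, readable place. Below is a minimal sketch, assuming your classifier emits the labels `bug`, `feature`, and `adoption_risk`; the labels, team names, and the -0.6 alert threshold are hypothetical and should be replaced with your own taxonomy.

```python
from dataclasses import dataclass


@dataclass
class Feedback:
    id: str
    kind: str         # assumed classifier label: "bug", "feature", or "adoption_risk"
    sentiment: float  # -1.0 (very negative) to 1.0 (very positive)


# Hypothetical routing table: classifier label -> owning team.
ROUTES = {"bug": "support", "feature": "product", "adoption_risk": "success"}

# Hypothetical alert threshold: at or below this sentiment, escalate.
ALERT_SENTIMENT = -0.6


def route(item: Feedback) -> tuple[str, bool]:
    """Return (team, escalate). Unknown labels fall back to a manual triage queue."""
    team = ROUTES.get(item.kind, "triage")
    escalate = item.sentiment <= ALERT_SENTIMENT
    return team, escalate


print(route(Feedback("fb-1", "bug", -0.9)))
print(route(Feedback("fb-2", "feature", 0.3)))
print(route(Feedback("fb-3", "unlabeled", 0.0)))
```

Keeping thresholds as named constants makes the weekly model check concrete: you can see exactly which number changed and why.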

Takeaway: tools help, process wins.

Craft, not just compute

Data helps you hear. Judgment decides what to build. We favor clear writing, small, scoped releases, and visible ownership. Ship fixes within 7 days when possible, explain tradeoffs in your changelog, and keep the board tidy. Detail is the brand.

What is next for AI in feedback

- Generative assistance that drafts release notes, executive briefs, and user replies.

- Multimodal analysis from text, audio, video, and session replay in one flow.

- Closed‑loop actions that trigger tickets, experiments, or outreach on their own.

For deeper dives into sentiment techniques and market shifts, explore:

- https://getthematic.com/insights/automated-sentiment-analysis

- https://www.zendesk.com/blog/ai-customer-feedback/

- https://www.unwrap.ai/post/customer-feedback-management

Takeaway: the stack is converging on faster loops with richer context. Quality still depends on human choices.